In this section, we summarize everything that you must know to accelerate deep learning workloads with TF32 Tensor Cores.

TF32 is the default mode for AI on A100 when using the NVIDIA optimized deep learning framework containers for TensorFlow, PyTorch, and MXNet, starting with the 20.06 versions available at NGC. TF32 is also enabled by default for A100 in framework repositories starting with PyTorch 1.7 and TensorFlow 2.4, as well as nightly builds for MXNet 1.8. Deep learning researchers can use these framework repositories and containers to train single-precision models with benefits from TF32 Tensor Cores.

TF32 mode accelerates single-precision convolution and matrix-multiply layers, including linear and fully connected layers, recurrent cells, and attention blocks. TF32 does not accelerate layers that operate on non-FP32 tensors, such as 16-bit, FP64, or integer precisions. TF32 also does not apply to layers that are not convolution or matrix-multiply operations (for example, batch normalization), or to optimizer or solver operations. Tensor storage is not changed when training with TF32. Everything remains in FP32, or whichever format is specified in the script.

For developers

Across the NVIDIA libraries, you see Tensor Core acceleration for the full range of precisions available on A100, including FP16, BF16, and TF32. This includes convolutions in cuDNN, matrix multiplies in cuBLAS, factorizations and dense linear solvers in cuSOLVER, and tensor contractions in cuTENSOR. In this post, we discuss the various considerations for enabling Tensor Cores in NVIDIA libraries.

cuDNN

cuDNN is the deep neural network library primarily used for convolution operations. Convolutional layers in cuDNN have descriptors that describe the operation to be performed, such as the math type. With version 8.0 and greater, convolution operations are performed with TF32 Tensor Cores when you use the default math mode CUDNN_DEFAULT_MATH or specify the math type as CUDNN_TENSOR_OP_MATH. The library internally selects TF32 convolution kernels if they exist when operating on 32-bit data. For Volta and previous versions of cuDNN, the default math option continues to be FP32.
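Because the framework-level defaults described above depend on the framework version, it can be useful to set the TF32 switches explicitly. The following is a minimal sketch for PyTorch only, assuming PyTorch 1.7 or later. The helper name `set_tf32` is hypothetical; the two flags it sets, `torch.backends.cuda.matmul.allow_tf32` (matrix multiplies) and `torch.backends.cudnn.allow_tf32` (cuDNN convolutions), are PyTorch settings. Later PyTorch releases changed the default of the matmul flag, which is one reason not to rely on defaults.

```python
def set_tf32(enabled: bool) -> bool:
    """Toggle TF32 for matmuls and cuDNN convolutions in PyTorch.

    Hypothetical helper for illustration. Returns True if the flags were
    set, or False if PyTorch is not installed in this environment.
    """
    try:
        import torch
    except ImportError:
        return False  # nothing to configure without PyTorch

    # Controls TF32 use in matrix multiplies on Ampere and later GPUs.
    torch.backends.cuda.matmul.allow_tf32 = enabled
    # Controls TF32 use in cuDNN convolutions.
    torch.backends.cudnn.allow_tf32 = enabled
    return True


if __name__ == "__main__":
    applied = set_tf32(True)
    print("TF32 flags set" if applied else "PyTorch not available")
```

These flags only affect FP32 convolutions and matrix multiplies. Tensor storage and all other layers are unchanged, consistent with the description above.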

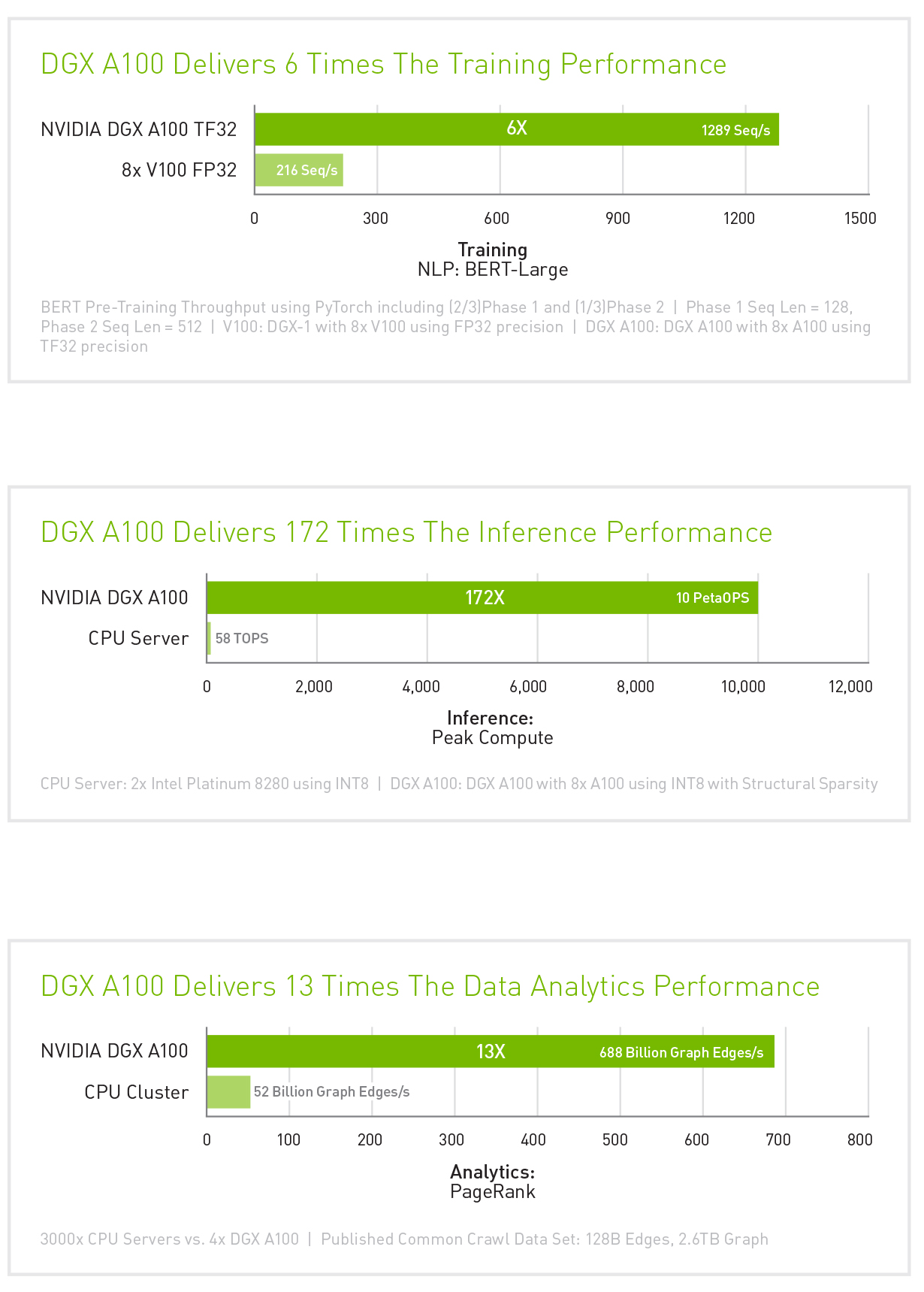

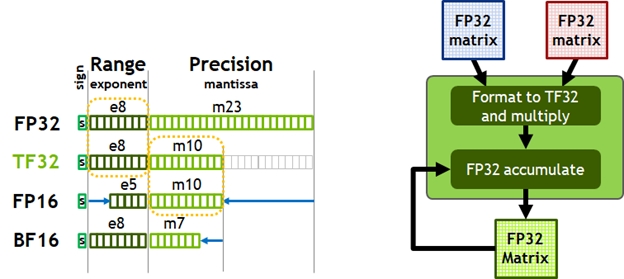

TF32 is a new compute mode added to Tensor Cores in the Ampere generation of GPU architecture. Dot product computation, which forms the building block for both matrix multiplies and convolutions, rounds FP32 inputs to TF32, computes the products without loss of precision, then accumulates those products into an FP32 output (Figure 1).

Figure 4. Accuracy values throughout training in FP32 (black) and TF32 (green) for various AI workloads. From left to right: ResNet50, Mask R-CNN, Vaswani Transformer, Transformer-XL.

Training speedups

As shown earlier, TF32 math mode, the default for single-precision DL training on the Ampere generation of GPUs, achieves the same accuracy as FP32 training, requires no changes to hyperparameters for training scripts, and provides an out-of-the-box 10X faster “tensor math” (convolutions and matrix multiplies) than single-precision math on Volta GPUs. However, speedups observed for networks in practice vary, since all memory accesses remain FP32 and TF32 mode doesn’t affect layers that are not convolutions or matrix multiplies.

Figure 5 shows that speedups of 2-6x are observed in practice for single-precision training of various workloads when moving from V100 to A100. Furthermore, switching to mixed precision with FP16 gives a further speedup of up to ~2x, as 16-bit Tensor Cores are 2x faster than TF32 mode and memory traffic is reduced by accessing half the bytes. Thus, TF32 is a great starting point for models trained in FP32 on Volta or other processors, while mixed-precision training is the option to maximize training speed on A100.

Figure 5. A100 speedups over V100 FP32 for PyTorch, TensorFlow or MXNet using NGC containers 20.08 and 20.11 with models from NVIDIA Deep Learning Examples.
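To see why a 10x gain in tensor math does not become a 10x gain for a whole network, consider a back-of-the-envelope estimate based on Amdahl's law. This is only a sketch: the function name and the example fractions are hypothetical, and it ignores other A100 improvements such as memory bandwidth, so it is not a model of the measured results in Figure 5.

```python
def overall_speedup(tensor_math_fraction: float, tensor_math_speedup: float) -> float:
    """Amdahl's-law estimate of end-to-end speedup.

    tensor_math_fraction: share of the original runtime spent in
        convolutions and matrix multiplies (between 0 and 1).
    tensor_math_speedup: how much faster that share becomes.
    """
    if not 0.0 <= tensor_math_fraction <= 1.0:
        raise ValueError("tensor_math_fraction must be in [0, 1]")
    if tensor_math_speedup <= 0.0:
        raise ValueError("tensor_math_speedup must be positive")
    # Time not spent in tensor math is assumed to be unchanged.
    unaccelerated = 1.0 - tensor_math_fraction
    accelerated = tensor_math_fraction / tensor_math_speedup
    return 1.0 / (unaccelerated + accelerated)


if __name__ == "__main__":
    # Hypothetical workload mixes, each with a 10x tensor-math speedup.
    for fraction in (0.5, 0.8, 0.95):
        print(f"{fraction:.0%} tensor math -> {overall_speedup(fraction, 10.0):.2f}x overall")
```

Under these assumptions, a network that spends 80% of its time in tensor math gains roughly 3.6x overall, which illustrates why layers that TF32 does not accelerate limit the end-to-end speedup.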

NVIDIA Ampere GPU architecture introduced the third generation of Tensor Cores, with the new TensorFloat32 (TF32) mode for accelerating FP32 convolutions and matrix multiplications. TF32 mode is the default option for AI training with 32-bit variables on Ampere GPU architecture. It brings Tensor Core acceleration to single-precision DL workloads, without needing any changes to model scripts. Mixed-precision training with a native 16-bit format (FP16/BF16) is still the fastest option, requiring just a few lines of code in model scripts. Table 1 shows the math throughput of A100 Tensor Cores, compared to FP32 CUDA cores. It’s also worth pointing out that for single-precision training, the A100 delivers 10x higher math throughput than the previous generation training GPU, V100.
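To make the TF32 mode concrete, the dot product behavior described in this post (round FP32 inputs to TF32, multiply without loss of precision, accumulate in FP32) can be emulated in plain Python. This is a rough sketch rather than a description of the hardware. It relies on TF32 keeping the 8-bit exponent of FP32 with a 10-bit mantissa, it assumes round-to-nearest-even when dropping the low 13 mantissa bits, and it rounds the accumulator to FP32 after every addition. The function names are hypothetical.

```python
import math
import struct


def to_fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest FP32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def round_to_tf32(x: float) -> float:
    """Round a value to TF32 precision (10 explicit mantissa bits).

    Assumption: round-to-nearest-even; the hardware rounding mode may differ.
    """
    if math.isnan(x) or math.isinf(x):
        return x
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # FP32 has 23 mantissa bits; TF32 keeps the top 10, so drop the low 13.
    keep_lsb = (bits >> 13) & 1
    bits = (bits + 0x0FFF + keep_lsb) & 0xFFFFE000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def tf32_dot(a, b) -> float:
    """Emulated TF32 dot product with FP32 accumulation."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    acc = 0.0
    for x, y in zip(a, b):
        # The product of two 11-bit significands is exact in FP64.
        product = round_to_tf32(x) * round_to_tf32(y)
        # Keep the running sum at FP32 precision.
        acc = to_fp32(acc + product)
    return acc


if __name__ == "__main__":
    print(tf32_dot([1.0, 2.0], [3.0, 4.0]))  # 11.0
    print(round_to_tf32(0.1))  # 0.1 rounded to 10 mantissa bits
```

Only the inputs lose precision in this scheme. The products and the accumulated output keep FP32 range and precision, which is consistent with TF32 training matching FP32 accuracy in the results shown in this post.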
